I’m working on a project where testing is important. We had a predecessor project with the same customer, and testing was also considered important at that time. During the previous project, I had problems communicating with the test coordinator; for one thing, we put importance on wildly different things. In the current project, we have a dedicated tester, and I’ve figured out what the earlier communication issue was. Before we go into the details, let’s look at an example.

Suppose we have a service that grants car insurance. The rule for granting insurance is: women can always get insurance, and men can get it if they are not young (as young men drive badly). How would one go about testing this? The approach taken by traditional testing is to identify logical test cases, which are descriptions of the combinations of parameters necessary to test the entire functionality. We definitely need a case with a young man and a case with somebody who is not a young man. Depending on how thorough we are, the second case can be further split into “a man but not young” and “a woman.” Each test case would include the expected outcome.

The approach I have been working with for roughly 10 years in academia is model-based testing. Here we don’t care about logical test cases. Instead we identify the important variables; in the example from before, we would focus on the gender of the applicant and their age. Instead of identifying the “important” combinations, as one does when figuring out logical test cases, we just indiscriminately try all combinations of values.
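
As a minimal sketch of what “try all combinations” means for the insurance example, the enumeration could look like the following. The class, enum, and method names are hypothetical and chosen only for illustration; the two age classes are the simplest split the example allows.

```java
import java.util.ArrayList;
import java.util.List;

class AllCombinations {
    // Assumed value domains for the two variables of the insurance example.
    enum Gender { WOMAN, MAN }
    enum AgeClass { YOUNG, NOT_YOUNG }

    // One concrete test input: a value for every variable.
    static final class Applicant {
        final Gender gender;
        final AgeClass age;

        Applicant(Gender gender, AgeClass age) {
            this.gender = gender;
            this.age = age;
        }

        @Override
        public String toString() {
            return gender + ", " + age;
        }
    }

    // Enumerates the full Cartesian product of the variable domains.
    static List<Applicant> allApplicants() {
        List<Applicant> result = new ArrayList<>();
        for (Gender gender : Gender.values()) {
            for (AgeClass age : AgeClass.values()) {
                result.add(new Applicant(gender, age));
            }
        }
        return result;
    }

    public static void main(String[] args) {
        allApplicants().forEach(System.out::println);
    }
}
```

Nobody has to decide which combinations matter; adding a variable means adding one more enum and one more loop.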

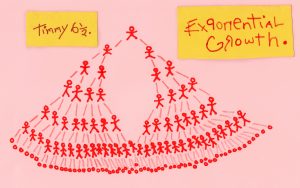

The problem with considering “all cases” is that their number can grow very large. In our example, the difference between the traditional method and my method is two or three logical test cases versus 2 * 2 = 4 test cases. The difference typically grows as the complexity grows. Assume we also want to test somebody on the border between old and young (assume people below 25 are considered young); now we have 3 possible values for age: < 25, 25, > 25. This leads to 2 * 3 = 6 test cases, while we add just one more logical test case. If I add a variable such as driving history, which can take values like careful and reckless, we get one or two more logical cases, but the total number of cases grows to 2 * 2 * 3 = 12. As this number grows fast in practice (I believe I calculated a total of 144 cases in the practical example this is based on), it is understandable that traditional testing wants to reduce it to keep the testing load manageable.
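
The arithmetic above is just the product of the number of values each variable can take. A small sketch, with a hypothetical helper name, makes the growth explicit:

```java
class CaseCount {
    // The number of exhaustive test cases is the product of all domain sizes.
    static long totalCases(int... domainSizes) {
        long total = 1;
        for (int size : domainSizes) {
            total *= size;
        }
        return total;
    }

    public static void main(String[] args) {
        System.out.println(totalCases(2, 2));    // gender x {young, not young}
        System.out.println(totalCases(2, 3));    // gender x {< 25, 25, > 25}
        System.out.println(totalCases(2, 2, 3)); // driving history x gender x age
    }
}
```

Every additional variable multiplies the total, while it typically adds only a case or two to a hand-written list of logical test cases.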

These days, however, we have magical computing machines called computers at our disposal. These room-size electronic brains love doing repetitive stuff. A computer wouldn’t complain about having to perform 144 very similar tests. Or 1440, or 1440000. The only issue is that the computer needs to be able to compare the result of each test with a “known good” value. The computer can run the program, but it needs to compare the result with a value derived from the specification, not from the implementation.

If a tester were to derive 144 correct values from a specification, we would just have shifted the issue from running the 144 tests, which is simple enough that you can have an intern do it, to deriving the correct results from the specification. That’s something that requires a PhD or at least reading comprehension on par with a 12-year-old.

The solution is again to have the computer derive the correct values. To do this, we need an executable specification, often called a model. The model can take many forms, such as a state chart, a flow chart, or a Petri net.
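
For the insurance rule, the model can be as small as a single boolean expression. The following is only a sketch under stated assumptions: the `InsuranceService` interface, the age range of 18 to 100, and the cut-off of 25 for “young” are all made up for illustration, and a real model might just as well be a state chart or a Petri net.

```java
class ModelOracle {
    enum Gender { WOMAN, MAN }

    // Hypothetical interface standing in for the implementation under test.
    interface InsuranceService {
        boolean grant(int age, Gender gender);
    }

    // The model: a direct transcription of the rule, assuming "young" means below 25.
    static boolean model(int age, Gender gender) {
        boolean young = age < 25;
        return gender == Gender.WOMAN || !young;
    }

    // Runs the implementation on every combination and counts disagreements with the model.
    static int countMismatches(InsuranceService impl) {
        int mismatches = 0;
        for (Gender gender : Gender.values()) {
            for (int age = 18; age <= 100; age++) { // assumed age range
                if (impl.grant(age, gender) != model(age, gender)) {
                    mismatches++;
                }
            }
        }
        return mismatches;
    }

    public static void main(String[] args) {
        // A stand-in implementation that wrongly ignores gender.
        InsuranceService ageOnly = (age, gender) -> age >= 25;
        System.out.println("mismatches: " + countMismatches(ageOnly));
    }
}
```

The expected value for each of the 166 inputs comes from `model`, so nobody has to write any of them down by hand.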

That finally leads us to our communication problem: while traditional testers were focusing on the hardest part of their approach, defining logical test cases, I was focusing on the hardest part of my approach, defining the variables and the model. As the specifications from the projects were quite good, the hardest part for me was by far defining the variables, whereas specifying logical test cases was no issue: we’d just test all possible combinations of all variables, and the majority of the time spent testing went into setting up the automatic tests.

I have two very large issues with spending time on specifying logical test cases: it is ridiculously hard and time-consuming to do, and even if you do it correctly your tests are inferior. Let’s address each of these.

Story time: I once co-authored a paper about estimating population sizes based on genealogy. Co-authored is being generous; I just did a bit of the technical work and proofread the paper. The actual author had posed the problem of describing all possible genealogies to a couple of master’s students. They spent a long time, and finally presented 11 possibilities. A PhD student reviewed their work and added another two possibilities, and finally the originator added one more genealogy before growing tired of doing this by hand and deciding to have a computer do it. Writing the specification and having the computer derive all possibilities took minutes, and the computer came up with the correct answer of 15 (adding one to all the previous) in less than a second. And the computer description could be generalized to do the same task for more chromosome pairs than the two originally used.

The exact same issues arise when deriving logical test cases: it is ridiculously hard to correctly identify all of them. In our project, each time I have looked at some test cases or results, I have suggested adding one or two new test cases. I’ve stopped looking, because I’m certain that if I do, our tester will have to add another test case. Our tester similarly included a case or two I hadn’t considered despite having written the implementation. And if somebody else reviews the test suite after our tester has added all his cases, and I have added all my cases, they will likely identify more cases. All of this takes an incredible amount of time, and it is almost impossible to know when you’re done (just because another person hasn’t found another case doesn’t mean there are no more, just that that person couldn’t come up with one). At the end of the day, testing like this tends to become proof by exhaustion, where testing stops once 15-20 test cases are reached because nobody can keep track of missing cases anymore.

A big problem is that even after spending all this time coming up with the perfect set of test cases, you make assumptions about the implementation that might not be correct. Logical test cases for the insurance example could be “young man is denied,” “old man is granted” and “woman is granted.” As we have to actually execute the tests, we have to assign an age to the woman in the last test case, but which age should we use?

Suppose we have implementations that look roughly like this:

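[java]
// Checks gender first, then age.
boolean grantInsurance1(int age, Gender gender) {
    if (gender == Gender.WOMAN) return true;
    if (age < 25) return false; // people below 25 count as young
    return true;
}
[/java]

[java]
// Checks age first, then gender.
boolean grantInsurance2(int age, Gender gender) {
    if (age < 25) return false; // people below 25 count as young
    if (gender == Gender.WOMAN) return true;
    return true;
}
[/java]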

Which one of these is correct and which one is wrong? Depending on which age is assigned to the woman in the last test case, our test suite would not necessarily be able to tell. Since the age isn’t specified, nothing prevents the tester from arbitrarily putting in 30 (they might reasonably just be copying the previous test, “old man is granted,” which they just finished setting up). Now the test suite would say that both implementations are correct, because the “irrelevant” test case “young woman is granted insurance” isn’t part of the suite.
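
To make this concrete, here is a sketch of the three logical test cases run against both variants. The two methods are modeled on the implementations in the text, with “young” assumed to mean below 25; the ages 20 and 30, the `InsuranceService` interface, and the `passes` helper are hypothetical choices for illustration.

```java
class LogicalSuite {
    enum Gender { WOMAN, MAN }

    // Hypothetical interface so both variants can be passed to the same suite.
    interface InsuranceService {
        boolean grant(int age, Gender gender);
    }

    // Checks gender first, then age.
    static boolean grantInsurance1(int age, Gender gender) {
        if (gender == Gender.WOMAN) return true;
        if (age < 25) return false;
        return true;
    }

    // Checks age first, then gender.
    static boolean grantInsurance2(int age, Gender gender) {
        if (age < 25) return false;
        if (gender == Gender.WOMAN) return true;
        return true;
    }

    // The three logical test cases; womanAge is the value the tester had to pick.
    static boolean passes(InsuranceService impl, int womanAge) {
        return !impl.grant(20, Gender.MAN)          // young man is denied
            && impl.grant(30, Gender.MAN)           // old man is granted
            && impl.grant(womanAge, Gender.WOMAN);  // woman is granted
    }

    public static void main(String[] args) {
        System.out.println("age 30, variant 1: " + passes(LogicalSuite::grantInsurance1, 30));
        System.out.println("age 30, variant 2: " + passes(LogicalSuite::grantInsurance2, 30));
        System.out.println("age 20, variant 1: " + passes(LogicalSuite::grantInsurance1, 20));
        System.out.println("age 20, variant 2: " + passes(LogicalSuite::grantInsurance2, 20));
    }
}
```

With the woman’s age set to 30, both variants pass all three cases. Only when the age happens to be below 25 does the suite reject the second variant, and nothing in the logical test case tells the tester to pick such an age.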

If you base your tests on the implementation, you can make sure that the “correct” logical cases are picked, but then your test suite is not very useful for regression testing as some programmer might refactor the correct grantInsurance1 into the incorrect grantInsurance2 as part of an unrelated change. This example is of course a bit artificial to make it more understandable, but more subtle variations of the above can very easily happen in reality.

Errors slip through in parts that haven’t been tested (this will probably come as a surprise to nobody at all), and by focusing on the “important” logical test cases you by definition don’t test everything. This is especially important when dealing with security issues: security issues most often happen when a user does something that “nobody would ever do.” The code doesn’t take this behavior into account, and the tests take it into account even less. For example, one might have a security issue that would allow me to withdraw a negative amount from my bank account (decrementing my balance by a negative amount, that is, incrementing it, and then failing in the payout of the negative amount). No logical test case would catch this because it is unspecified and nonsensical behavior.
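
A sketch of how such a bug might look, and how simply including the “nonsensical” value in the input domain exposes it. The `NaiveAccount` class is entirely hypothetical and not taken from any real system; the property checked is the assumed rule that a withdrawal never increases the balance.

```java
class NaiveAccount {
    private long balance;

    NaiveAccount(long initialBalance) {
        this.balance = initialBalance;
    }

    long balance() {
        return balance;
    }

    // Hypothetical withdrawal that guards against overdraft but not against a negative amount.
    boolean withdraw(long amount) {
        if (amount > balance) return false;
        balance -= amount; // a negative amount increases the balance
        return true;
    }

    public static void main(String[] args) {
        long[] amounts = { 50, 0, -50 }; // the domain includes the value "nobody would ever" enter
        for (long amount : amounts) {
            NaiveAccount account = new NaiveAccount(100);
            account.withdraw(amount);
            boolean balanceGrew = account.balance() > 100;
            System.out.println("withdraw(" + amount + ") leaves " + account.balance()
                + (balanceGrew ? " (violates: a withdrawal never increases the balance)" : ""));
        }
    }
}
```

A list of logical test cases would contain “withdraw less than the balance” and “withdraw more than the balance”; the negative amount only shows up when the input variable’s whole range is tried.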

With these arguments, it is clear why I prefer the model-based testing approach. This approach does have significant issues, though. The most important is that one has to understand and trust the approach. If not, there is way too much magic happening. I can sympathize with how difficult it is for somebody used to explicitly writing out logical test cases to grasp how identifying input variables specifies a test case. Even more, I can understand that it can be hard to trust the model to always generate the correct answers: how is the model not just another implementation? Well, the model IS just another implementation, and the goal is to make the extra implementation so “obviously correct” that one will trust it.

For this reason, we have a dedicated tester now. He speaks the same language as the test managers. I am trying to detach myself as much as possible from the testing, because I know the weaknesses of the best practice. At the same time, I now understand why they had and have issues with my approach.

This is not the end, though. This is literally the problem my PhD thesis was about (and with the 10-year anniversary of me handing that thing in, it is sure to still be relevant 🙂 ), so I have devised a design for a tool that I’ll try implementing to convince the people responsible for testing of the benefits of my approach. And perhaps provide tooling that will make testing simpler in the future. But that’s a topic for another post; this one has already gone on for long enough.